AI transformation is no longer primarily a technology challenge. It is a governance challenge.

Enterprises can now build AI pilots faster than they can safely scale, monitor, and control them. The real barrier is no longer access to AI models — it is accountability, risk management, decision rights, compliance, and trust.

For the past several years, enterprise AI adoption has been treated as a technology race. Organizations invested heavily in large language models, cloud infrastructure, data platforms, AI copilots, automation tools, and machine learning talent. The assumption was straightforward: once the right models and tools were in place, business transformation would follow.

That assumption has proven wrong.

In 2026, many enterprises are stalling not because they lack AI tools, but because they lack the operating model required to govern AI at scale. They build pilots but struggle to move them into production. They deploy copilots but cannot consistently measure their value. They give employees AI access but cannot always control how sensitive data is used. They automate workflows but cannot adequately explain who is accountable when something goes wrong.

This is the new governance ceiling.

Deloitte’s 2026 enterprise AI research highlights the scale of the challenge. Companies have expanded worker access to AI, but only 25% had moved 40% or more of their AI experiments into production, while 54% expected to reach that threshold within six months. That gap illustrates the difference between AI access and AI transformation. Giving employees AI tools is not the same as redesigning the business around AI.

McKinsey’s 2025 global AI research reaches a parallel conclusion. Companies capturing value from AI are not simply adopting more tools — they are redesigning workflows, operating models, governance practices, data foundations, and adoption mechanisms around the technology. McKinsey also found that CEO oversight of AI governance was among the elements most strongly correlated with higher self-reported bottom-line impact from generative AI.

The implication for CTOs, CIOs, CISOs, Chief AI Officers, and board-level technology leaders is clear: enterprise AI transformation is no longer a question of whether the technology works. It is a question of whether the organization can govern it.

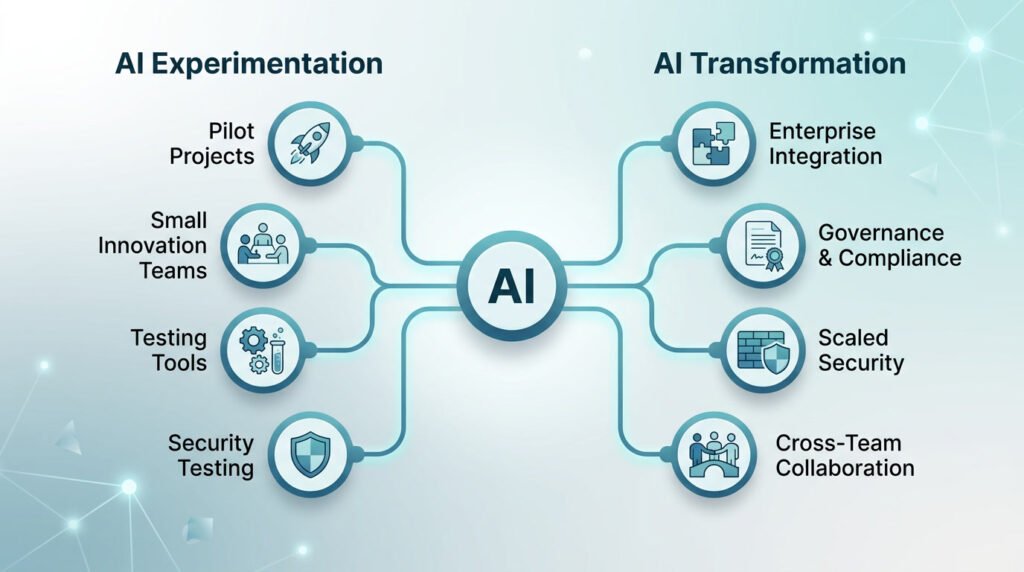

AI Experimentation vs. AI Transformation

Most enterprises have experimented with AI. Far fewer have transformed with it.

| Area | AI Experimentation | AI Transformation |
| --- | --- | --- |
| Primary goal | Test what AI can do | Redesign how work gets done |
| Ownership | Innovation team, IT, or data science | Business, technology, risk, and executive leadership |
| Governance | Informal review or one-time approval | Continuous oversight across the AI lifecycle |
| Risk management | Handled case by case | Built into policies, platforms, workflows, and controls |
| Data usage | Often inconsistent or unclear | Governed through classification, access control, and auditability |
| Success metric | Number of pilots or use cases | Business value, risk reduction, productivity, quality, and adoption |
| Accountability | Often unclear | Named business and technical owners |
| Scaling model | Fragmented tools and teams | Enterprise-wide operating model |

The distinction matters because AI pilots can generate excitement without delivering durable value. A chatbot proof of concept may impress leadership in a demo, but production AI must survive security reviews, legal scrutiny, regulatory expectations, employee adoption, board oversight, cost analysis, and operational stress.

Scalable AI transformation depends on structured governance — not just capable models.

The Governance Ceiling: Why AI Pilots Stall

The governance ceiling appears when an enterprise has enough AI capability to innovate but lacks the oversight infrastructure to scale.

At the pilot stage, teams move quickly. They test models, run experiments, build internal copilots, and prototype agentic workflows. But once AI begins touching customer experiences, employee decisions, regulated data, financial analysis, legal review, cybersecurity, hiring, lending, procurement, or supply chain operations, the questions become significantly more complex:

- Who approved the use case?

- Which model is being used?

- What data does it access?

- Is customer or employee data exposed?

- Can the model output be audited?

- What happens if the model is wrong?

- Who signs off on automated decisions?

- How is performance monitored after deployment?

- When should a system be paused, escalated, or retired?

These are governance questions — not model selection questions.

The rise of agentic AI makes this more urgent. AI systems are evolving from passive assistants into active participants that can execute workflows, utilize enterprise context, interact with business systems, and take action within defined permissions. As AI gains broader access to internal tools and knowledge, oversight must become continuous, enforceable, and operationally embedded.

Why Governance Matters More Than Ever

AI governance is frequently misunderstood as a compliance function. In reality, it is the operating system for enterprise AI.

It defines who can use AI, which tools are approved, what data can be accessed, which use cases require review, who owns outputs, how risks are monitored, and how value is measured.

Without governance, AI adoption becomes fragmented. Teams choose different tools independently. Employees use public AI systems without approval. Sensitive data moves through unmonitored channels. Business units launch overlapping pilots. Legal and security teams are engaged too late. Leaders struggle to explain where AI is deployed and what it is producing.

Structure solves these problems.

Strong governance does not slow innovation — it accelerates adoption. When teams understand the rules before they begin, they move with greater speed and confidence. A clear governance model gives employees approved tools, gives business leaders defined decision rights, gives security teams visibility, and gives executives the confidence that AI can scale responsibly.

Poorly governed AI creates hesitation. Well-governed AI creates speed.

Risk Management: AI Expands the Enterprise Risk Surface

AI risk management is now one of the most critical components of enterprise transformation.

Traditional software risk centers on access, uptime, security vulnerabilities, data protection, and compliance. AI introduces a distinct category of risk: hallucination, model drift, bias, prompt injection, unauthorized data exposure, intellectual property leakage, overreliance on automated outputs, explainability gaps, vendor dependency, and uncontrolled employee usage.

The National Institute of Standards and Technology’s AI Risk Management Framework is one of the most practical references available to enterprise leaders. NIST developed the framework to help organizations manage risks to individuals, organizations, and society from AI systems. The AI RMF is organized around four core functions: govern, map, measure, and manage.

A one-time approval process is insufficient for AI systems that change over time. AI applications depend on shifting prompts, evolving retrieval sources, updated models, changing user behavior, and new security threats. A system that performs well in a controlled test environment can behave differently under production conditions.

Modern AI risk management should include:

- AI use-case classification

- Model and vendor risk assessment

- Data classification and access controls

- Human-in-the-loop requirements

- Red teaming and adversarial testing

- Bias and fairness evaluation

- Prompt injection and data leakage testing

- Audit logs and explainability requirements

- Incident response procedures

- Ongoing performance monitoring

Risk management should not block AI adoption. It should make AI safe enough to scale. The enterprises that treat governance as a deployment accelerator — rather than a compliance burden — will move faster, with less uncertainty, and with greater organizational confidence.
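As one way to picture use-case classification, the sketch below assigns a risk tier from three yes/no factors and maps each tier to controls from the list above. The factors, the thresholds, and the `REQUIRED_CONTROLS` mapping are illustrative assumptions, not part of the NIST framework or any standard; a real scheme would be set by the organization's own risk and compliance teams.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class UseCase:
    name: str
    uses_sensitive_data: bool  # customer, employee, financial, or proprietary data
    affects_people: bool       # e.g. hiring, lending, or customer-facing decisions
    acts_autonomously: bool    # takes action without a human approving each step


def classify(use_case: UseCase) -> RiskTier:
    """Illustrative rule: the tier rises with the number of risk factors present."""
    factors = sum(
        [use_case.uses_sensitive_data, use_case.affects_people, use_case.acts_autonomously]
    )
    if factors >= 2:
        return RiskTier.HIGH
    if factors == 1:
        return RiskTier.MEDIUM
    return RiskTier.LOW


# Hypothetical mapping from tier to controls drawn from the list above.
REQUIRED_CONTROLS = {
    RiskTier.LOW: ["audit logs"],
    RiskTier.MEDIUM: ["audit logs", "ongoing performance monitoring"],
    RiskTier.HIGH: [
        "audit logs",
        "ongoing performance monitoring",
        "human-in-the-loop review",
        "red teaming and adversarial testing",
    ],
}

resume_screening = UseCase("resume screening", True, True, False)
tier = classify(resume_screening)
print(tier.value, REQUIRED_CONTROLS[tier])
```

The point of the design is that the tier, not the individual project team, determines which controls apply, so similar use cases follow the same review path.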

The Shadow AI Problem

One of the most serious challenges facing technology leaders is shadow AI.

Shadow AI occurs when employees use unauthorized AI tools outside official IT, security, procurement, or compliance channels. They may paste confidential documents into consumer AI systems, use unapproved chatbots to analyze customer data, generate code through tools that have not been security-reviewed, or build AI-driven workflows without auditability.

This creates a dangerous visibility gap. An organization cannot govern tools it does not know exist.

Gartner has warned that shadow AI is becoming a critical enterprise risk. In November 2025, Gartner predicted that by 2030, more than 40% of enterprises would experience security or compliance incidents linked to unauthorized shadow AI. Gartner also reported that 69% of surveyed organizations suspected or had evidence that employees were actively using prohibited public generative AI tools.

Shadow AI is rarely just an employee discipline issue. More often, it is a symptom of weak governance design.

Employees turn to unauthorized tools because approved alternatives are unavailable, too restrictive, poorly integrated, or too slow for daily work. When policy only says “no,” employees find workarounds. When governance provides approved, secure, and genuinely useful alternatives, compliance follows.

The answer is not a blanket ban. It is building a practical enterprise AI environment where employees can use AI safely within clear boundaries.

A strong shadow AI response should include:

- An approved AI tools list

- A clear prohibited AI usage policy

- Browser, SaaS, and network-level visibility

- Robust data loss prevention controls

- Role-based employee training

- Routine, automated audits of AI usage

- Streamlined procurement reviews for AI vendors

- Safe internal AI assistants for common workflows

The governance goal is visibility first, control second, and trusted adoption third.
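The "visibility first, control second" ordering can be sketched as a small request check: traffic to an unapproved AI tool is logged, and it is blocked only when the text appears to contain sensitive data. The host name, the two regular expressions, and the `review_request` function are hypothetical and deliberately simplistic; real data loss prevention tooling covers far more patterns and channels.

```python
import re
from urllib.parse import urlparse

# Hypothetical allowlist; a real one would come from the approved AI tools list.
APPROVED_AI_HOSTS = {"assistant.internal.example.com"}

# Deliberately simple patterns; production DLP tooling is far more thorough.
SENSITIVE_PATTERNS = {
    "email address": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN-like number": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def review_request(url: str, text: str) -> dict:
    """Decide whether to allow, log, or block text sent to an AI tool."""
    host = urlparse(url).hostname or ""
    approved = host in APPROVED_AI_HOSTS
    findings = [label for label, pattern in SENSITIVE_PATTERNS.items() if pattern.search(text)]
    if approved:
        action = "allow"   # approved tools are assumed to be cleared for this data
    elif findings:
        action = "block"   # control: sensitive data headed to an unapproved tool
    else:
        action = "log"     # visibility: record the usage without stopping work
    return {"host": host, "approved": approved, "findings": findings, "action": action}


print(review_request("https://chat.example.org/api", "Summarize the notes for jane.doe@example.com"))
```

Logging rather than blocking the low-risk case reflects the argument above: a policy that only says "no" pushes employees toward workarounds.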

Decision-Making: Clarifying Who Decides

AI transformation frequently breaks down because decision-making authority is unclear.

In many organizations, AI starts inside technical teams. Data scientists, engineers, IT staff, or innovation labs build the early use cases. That works during experimentation. It does not work for enterprise-wide transformation.

AI decisions are rarely purely technical. A model may be accurate but legally risky. A workflow may be efficient but unacceptable from a customer trust perspective. A chatbot may reduce service costs but damage brand credibility if it provides misleading answers. An AI agent may boost productivity but create serious security exposure if granted broad access to internal systems.

Effective governance must explicitly define decision rights across three levels.

1. Strategic Decisions

These belong to the CEO, board of directors, CIO, CTO, CISO, Chief Data Officer, Chief AI Officer, legal leadership, and senior business executives.

Strategic decisions include:

- AI investment priorities

- Overall risk appetite

- High-impact use-case approval

- Enterprise-wide AI policy

- Build-versus-buy strategy

- Regulatory posture

- Board-level reporting

- AI operating model design

2. Operational Decisions

These belong to cross-functional governance groups and business leaders.

Operational decisions include:

- Use-case risk classification

- Data access approval

- Vendor review

- Model validation requirements

- Human review requirements

- Deployment readiness

- Monitoring thresholds

- Escalation procedures

3. Execution Decisions

These belong to technology, security, data, product, and operations teams.

Execution decisions include:

- Model implementation

- Prompt and retrieval design

- Monitoring configuration

- Access control enforcement

- Testing and validation

- System documentation

- Incident handling

- Workflow integration

The goal is not bureaucracy. The goal is clarity. AI transformation moves faster when teams know exactly who holds the authority to decide.
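One lightweight way to make decision rights explicit is a lookup table that maps each decision type to the level that owns it. The sketch below uses a subset of the decisions listed above; the `DecisionLevel` enum and `who_decides` function are hypothetical names chosen for illustration.

```python
from enum import Enum


class DecisionLevel(Enum):
    STRATEGIC = "strategic"
    OPERATIONAL = "operational"
    EXECUTION = "execution"


# A subset of the decisions listed above, keyed by decision type.
DECISION_RIGHTS = {
    "overall risk appetite": DecisionLevel.STRATEGIC,
    "build-versus-buy strategy": DecisionLevel.STRATEGIC,
    "use-case risk classification": DecisionLevel.OPERATIONAL,
    "data access approval": DecisionLevel.OPERATIONAL,
    "monitoring configuration": DecisionLevel.EXECUTION,
    "incident handling": DecisionLevel.EXECUTION,
}


def who_decides(decision: str) -> DecisionLevel:
    """Return the level that owns a decision; an unmapped decision is a governance gap."""
    key = decision.strip().lower()
    if key not in DECISION_RIGHTS:
        raise LookupError(f"No decision owner defined for: {decision!r}")
    return DECISION_RIGHTS[key]


print(who_decides("Data access approval").value)
```

Raising an error for an unmapped decision, rather than returning a default, surfaces the gap instead of hiding it.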

Accountability: The Missing Link

Accountability is the foundation of enterprise AI governance.

Every AI system should have a named owner. Every high-risk use case should have a defined approval path. Every AI-driven workflow should have someone explicitly responsible for performance, risk, and business outcomes.

Without accountability, governance becomes documentation without control.

Many organizations make the mistake of assigning AI accountability entirely to IT. Technology teams should own platforms, access controls, security, integration, model operations, and technical reliability. But business leaders must own the outcomes AI produces within their functions.

If AI is used in sales forecasting, the sales organization owns the decision process. If AI handles contract review, legal leadership owns the workflow. If AI assists in hiring, HR owns the impact. If AI powers customer support, customer operations owns service quality and escalation.

The technology organization enables the system. The business owns the decision.

This matters because AI does not merely produce outputs — it changes how work is performed. It influences judgment, prioritization, productivity, customer interactions, employee behavior, and operational risk.

A mature accountability model includes:

- Executive sponsor

- Business owner

- Technical owner

- Data owner

- Risk or compliance reviewer

- Security reviewer

- Human escalation owner

- Incident response owner

For high-impact systems, accountability must be visible to the board. Executives do not need to understand every prompt or model parameter, but they must know where AI is deployed, what risks it carries, what value it produces, and who is responsible.
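The accountability roles above can be treated as required fields on each entry in an AI inventory. The following is a minimal sketch, assuming a hypothetical `AISystemRecord` structure and illustrative owner names; it simply reports which roles are still unassigned.

```python
from dataclasses import dataclass, field

# Role names taken from the accountability model above.
REQUIRED_ROLES = (
    "executive_sponsor",
    "business_owner",
    "technical_owner",
    "data_owner",
    "risk_or_compliance_reviewer",
    "security_reviewer",
    "human_escalation_owner",
    "incident_response_owner",
)


@dataclass
class AISystemRecord:
    name: str
    owners: dict = field(default_factory=dict)  # role -> named person or team

    def missing_roles(self) -> list:
        """List required roles that have no named owner yet."""
        return [role for role in REQUIRED_ROLES if not self.owners.get(role, "").strip()]


record = AISystemRecord(
    name="contract review assistant",
    owners={"business_owner": "General Counsel", "technical_owner": "Platform Lead"},
)
print(record.missing_roles())
```

A check like this could run whenever a system is registered or changed, so that no deployment proceeds with an empty owner field.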

This is one reason the Chief AI Officer role is gaining traction. The title matters less than the function. Enterprises need a senior leader who can bridge AI strategy, governance, risk, technology, adoption, and measurable business value — and be accountable for all of it.

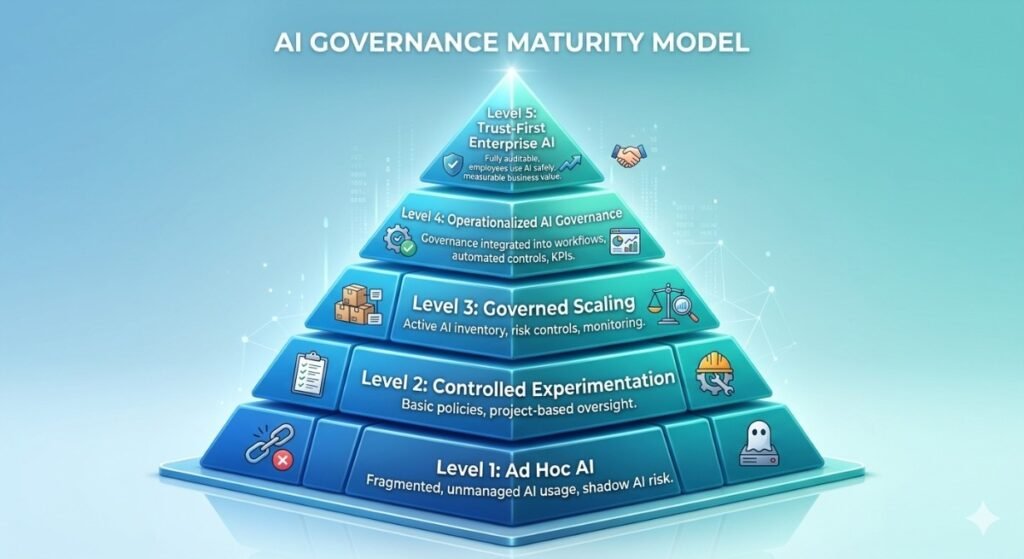

The AI Insider Governance Maturity Model

To move beyond scattered pilots, technology leaders need a reliable way to assess governance maturity. The following model helps CTOs and executive teams benchmark where they are and identify what to build next.

| Level | Description | Main Risk |
| --- | --- | --- |
| Level 1: Ad Hoc AI | AI usage is informal, fragmented, and largely unmanaged. Employees use public tools independently, and leadership has little visibility into data exposure or business impact. Oversight is reactive. | Shadow AI and uncontrolled data leakage. |
| Level 2: Controlled Experimentation | Basic policies and approved tools exist, but governance remains project-based. Some use cases are reviewed, but ownership and monitoring are inconsistent across the enterprise. | Pilots fail to scale due to disjointed controls. |
| Level 3: Governed Scaling | An active AI inventory, use-case classification, approval workflows, named owners, and risk controls are in place. Systems are monitored post-deployment. Oversight is embedded in product, data, security, and business processes. | Governance exists but may still be too manual or slow. |
| Level 4: Operationalized AI Governance | Governance is integrated into platforms, workflows, procurement, security operations, and board reporting. Controls are automated where possible. AI performance ties directly to operational KPIs. | Maintaining consistency across business units and geographies. |
| Level 5: Trust-First AI Enterprise | AI governance is a competitive advantage. The organization deploys AI faster because trust, accountability, risk controls, and monitoring are built into the core operating model. Employees know how to use AI safely. Leaders can measure value. Regulators and customers trust the organization’s AI practices. | Sustaining speed without sacrificing rigor as the organization scales. |

The Four Pillars of Enterprise AI Governance

A practical enterprise AI governance framework rests on four pillars.

| Pillar | Core Question | What It Requires |
| --- | --- | --- |
| Accountability | Who owns AI decisions and outcomes? | Named owners, executive sponsorship, escalation paths |
| Policy and Standards | What AI usage is permitted? | Data rules, use-case policies, model standards, vendor requirements |
| Risk and Compliance | How are AI risks identified and controlled? | Testing, monitoring, red teaming, audit logs, legal review |
| Performance Oversight | Is AI creating measurable value? | KPIs, adoption metrics, workflow impact, board reporting |

These pillars cannot live in a static policy document. They must be embedded into the enterprise operating model — present in procurement decisions, software development lifecycles, data access approvals, employee training, incident response, and board reporting.

AI governance only works when it becomes operational.

CTO Checklist: How to Govern AI Transformation

For CTOs and technology leaders, the immediate priority is moving from informal AI adoption to governed, scalable deployment.

Use this checklist as a starting point.

AI Visibility

- Do we maintain an active, updated inventory of all approved AI tools and use cases?

- Do we know where employees are currently using unauthorized AI tools?

- Can we identify which models, vendors, and data sources are involved?

- Have we implemented continuous AI inventory tracking to detect new deployments in real time?

- Have we deployed shadow AI detection tools across our network and endpoint environments?

Risk Management

- Have we formally classified all AI use cases by risk level?

- Do high-impact use cases automatically trigger additional mandatory reviews?

- Do we test for hallucination, bias, prompt injection, data leakage, and model drift?

- Have we established automated compliance monitoring to keep pace with evolving regulatory requirements?

Data Governance

- Are sensitive data types restricted from unapproved AI systems?

- Do we know which AI tools can access customer, employee, financial, or proprietary data?

- Are data retention and model training policies clearly defined in vendor contracts?

Decision-Making

- Who holds the authority to approve new AI use cases?

- Which decisions require legal, security, compliance, or board review?

- Under what conditions must a human remain in the loop?

Accountability

- Does every deployed AI system have a named business owner and technical owner?

- Who is responsible when an AI output causes harm, brand damage, or financial error?

- Who monitors system performance after deployment?

Performance Oversight

- Are AI systems tied to measurable business KPIs?

- Do we measure productivity, quality, cost reduction, revenue impact, risk reduction, or customer experience?

- Do we have the discipline to retire AI systems that fail to create value?

Trust and Adoption

- Are employees trained on approved AI usage?

- Do teams have access to safe internal tools capable of replacing shadow AI?

- Can senior leaders explain the organization’s AI governance model clearly?

If the answer to most of these questions is no, the organization is not ready for AI transformation at scale. It may be ready for experimentation — but not for governed, enterprise-wide deployment.
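The "most answers are no" test can be tallied mechanically. The sketch below assumes answers are recorded as yes/no values per checklist section; the `summarize_checklist` function and the sample answers are hypothetical, and the simple-majority threshold is an illustrative reading of "most," not a formal scoring method.

```python
def summarize_checklist(answers: dict) -> dict:
    """Tally yes/no answers (True/False) grouped by checklist section."""
    total = sum(len(section) for section in answers.values())
    yes = sum(sum(section) for section in answers.values())
    no = total - yes
    return {
        "yes": yes,
        "no": no,
        "mostly_no": no > total / 2,  # simple majority of "no" answers
        "sections_with_gaps": [name for name, section in answers.items() if not all(section)],
    }


# Illustrative answers for three of the seven sections.
example_answers = {
    "AI Visibility": [True, False, False, False, False],
    "Risk Management": [True, False, False, False],
    "Accountability": [True, True, True],
}
summary = summarize_checklist(example_answers)
print(summary)
```

Listing the sections with gaps alongside the overall tally keeps the result actionable: it shows where to build next, not just whether the organization passes.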

Why Trust Is Becoming a Competitive Advantage

Many executives still treat AI governance as a defensive requirement — something associated with compliance checklists, legal review, and risk reduction.

That view is too narrow.

Governance is becoming a speed advantage.

Organizations with weak governance move slowly because every AI use case triggers a custom debate. Teams do not know who approves what. Legal and security are pulled in at the last minute. Data policies are ambiguous. Board reporting is inconsistent. AI adoption becomes fragmented and politically exhausting.

Organizations with strong governance move faster because the rules are already established. Approved tools are known. Risk thresholds are defined. Use-case pathways are repeatable. Monitoring is built in. Accountability is assigned. Employees have capable, sanctioned options. Leaders trust the process.

Trust-first AI organizations do not ask only, “Are we compliant?”

They ask, “Do we have enough confidence in our governance model to deploy AI into the highest-value parts of our business?”

That is the real transformation question.

Sources and Further Reading

For readers who want to explore the governance, risk, and enterprise AI research behind this analysis, these resources are useful starting points:

- McKinsey, The State of AI: How Organizations Are Rewiring to Capture Value. Useful for understanding why workflow redesign, senior leadership involvement, and operating model changes are central to AI value creation.

- NIST, AI Risk Management Framework. A foundational reference for managing AI risk through the govern, map, measure, and manage functions.

- Gartner, Critical GenAI Blind Spots CIOs Must Address. Helpful for understanding enterprise security and compliance risks associated with shadow AI.

- Deloitte, State of AI in the Enterprise 2026. Covers global AI investment trends, adoption challenges, scaling barriers, and business impact measurement.

Conclusion: The Next AI Winners Will Govern Better

The next phase of enterprise AI will not be won by the organizations with the most pilots. It will be won by the organizations that can scale AI with trust, accountability, and measurable business value.

The technology is powerful. The models are improving rapidly. The tools are widely accessible. But enterprise transformation depends on something harder to acquire than access: the organizational discipline to govern AI at scale.

CTOs, CIOs, and technology leaders must help their organizations answer the questions that determine whether AI can grow safely:

- Who owns AI outcomes?

- Which use cases warrant scaling?

- What data can AI systems access?

- How are risks continuously monitored?

- How are decisions reviewed?

- How is value measured?

- How is trust earned?

The enterprises that answer these questions will move beyond experimentation. They will transform AI from a collection of promising tools into a governed, scalable business capability.

The enterprises that do not will remain beneath the governance ceiling — managing pilots, making promises, but achieving limited transformation.

In 2026, AI transformation is not a model problem. It is not a tooling problem. It is not a data problem.

It is a governance problem.

And the organizations that govern AI best will be the organizations that scale it best.


Key Takeaways

- Enterprise AI transformation is limited more by governance than by technology.

- Shadow AI is a serious enterprise risk because it creates invisible data and compliance exposure.

- Effective AI governance must incorporate risk management, decision rights, accountability, and performance oversight.

- CTOs must build AI inventories, classify use cases, assign owners, and monitor systems continuously.

- Trust-first governance accelerates AI adoption — it does not slow it down.

Frequently Asked Questions (FAQs)

What is AI transformation governance?

AI transformation governance is the comprehensive set of policies, roles, controls, decision rights, monitoring systems, and accountability structures that allow an organization to scale AI safely and effectively.

What is shadow AI?

Shadow AI is the use of unauthorized AI tools by employees outside official IT, security, or compliance oversight. It creates risks involving data leakage, intellectual property exposure, regulatory violations, and a lack of auditability.

Who should own AI governance?

AI governance should be owned jointly by executive leadership, technology officers, risk and compliance teams, security directors, data leaders, and business unit heads. A senior executive sponsor must ensure governance is connected to business strategy.

What role should CTOs and CIOs play in AI governance?

Technology leaders must define the AI platform strategy, design the security architecture, enforce data controls, implement monitoring systems, build approval workflows, and establish the technical standards required to scale AI responsibly.

What are the four pillars of AI governance?

The four pillars of AI governance are accountability, policy and standards, risk and compliance, and performance oversight.

How can enterprises reduce AI risk?

Enterprises can reduce AI risk by maintaining a continuous AI inventory, classifying use cases by risk level, restricting sensitive data access, monitoring model performance after deployment, testing for security vulnerabilities, assigning named owners, and training employees on approved usage.

How does AI governance improve business value?

Governance makes AI systems safer, easier to trust, easier to scale, and easier to measure. This enables organizations to move from isolated pilots to repeatable, enterprise-wide adoption.